This is beautiful.

I have always been interested in the space flights of the astronauts. I am sure that I join millions of others who feel the same.

So this article by Deana L. Weibel, Professor of Anthropology at Grand Valley State University, is terrific.

ooOOoo

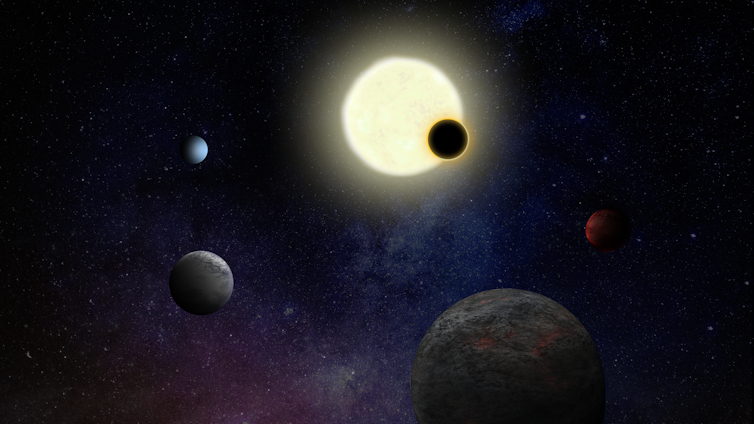

Seeing an eclipse from Earth is awe‑inspiring – for astronauts seeing one from space, the scene was even more grand

Deana L. Weibel, Grand Valley State University

The astronauts on Artemis II’s trip to the Moon in April 2026 didn’t just have an amazing journey through space. They also saw something extraordinary. They were the first humans to see a total solar eclipse from space.

A solar eclipse happens when the Moon moves in front of the Sun. In a total eclipse, the Sun’s central disc is covered completely.

From Earth, the circle of the Sun appears about the same size as the circle of the Moon. With the bright circle blocked, you can see the undulating rays of the Sun’s corona, or outer atmosphere, that are normally too dim to be observed.

I’m a cultural anthropologist who studies awe-inspiring aspects of space exploration. I have been lucky enough to have seen two total solar eclipses. The first one was in Nebraska in 2017, the second in Indiana in 2024.

During my second total eclipse, the period of totality – that short span when you can remove your protective glasses and look directly at the eclipse – lasted close to 4 minutes. I saw waves of diffuse light snaking around an ink-black hole in the sky. It looked very wrong – almost alien.

On Aug. 12, 2026, there will be another total solar eclipse, visible only from Greenland, Iceland, Spain and the Balearic Islands of the Mediterranean. Some fortunate viewers in Spain and nearby islands may see the eclipse just before sunset, low on the horizon. The Moon illusion, a phenomenon where the Moon looks bigger when it’s near the horizon, might make this eclipse look unusually large.

Unusual eclipse perspectives

Astronauts will occasionally also have less common eclipse experiences. I interviewed one I call by the pseudonym “Jackie” in my research about astronauts’ experiences of awe. She was part of an astronaut training group that did a flight exercise during a total solar eclipse.

Jackie and her squad flew their jets in the shadow of the Moon. This lengthened their time in totality because they could follow and stay within the shadow. Jackie was most impressed with how the Sun’s corona seemed to shift and ripple.

“It’s not static … it’s alive,” she told me.

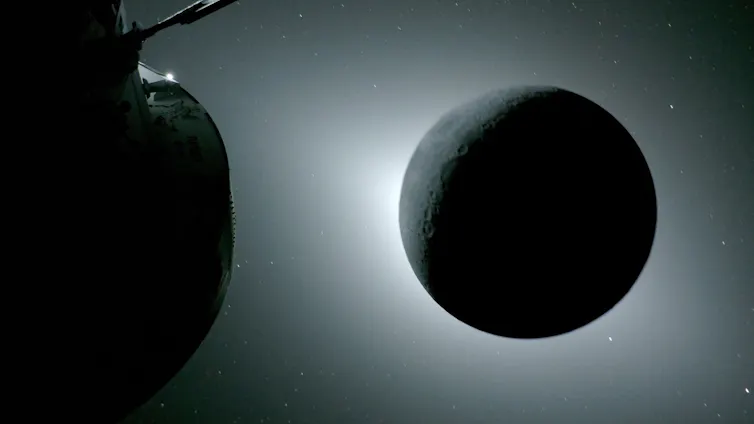

On April 6, 2026, the astronauts of NASA’s Artemis II mission saw another kind of unusual eclipse as they flew around the Moon. At one point during their flight, the Moon and the spacecraft aligned so that the Moon was directly between them and the Sun, blocking the Sun’s disk in a way that looks very different from what we see on Earth.

Astronaut Victor Glover said it felt like they “just went sci-fi.”

https://www.youtube.com/embed/YLjPci5bo1k?wmode=transparent&start=0

‘An impressive sight’: The Artemis II crew were the first humans to observe a solar eclipse from near the Moon.

The astronauts were so close to the Moon that the Moon looked bigger than the Sun and hid more of its bright circle. Earth was also in view, and sunlight reflected from the Earth onto the Moon in a phenomenon NASA calls “earthshine.” This dim light is very similar to the moonlight that shines on the Earth at night.

Imagine the Sun hidden behind the Moon, creating a hazy halo around the Moon’s edges. At the same time, faint light reflected from Earth softly illuminates the Moon, revealing mountains and craters in a dim twilight. Now imagine this striking scene lasting 54 minutes.

This sight was, without a doubt, one of the most unusual eclipses ever seen by human eyes.

Although Artemis’ astronauts are trained to think scientifically, this experience propelled them into a state of awe. They talked openly about how their brains were “not processing” what they observed. While NASA kept them busy with a variety of tasks, the sound of emotion and excitement in their voices as they broadcast live from their lunar flyby was unmistakable.

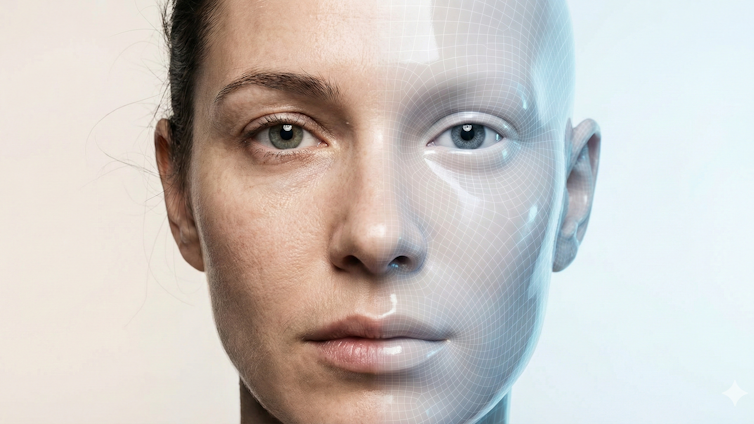

The psychology of awe

Researchers have studied the effects of awe on the human brain, including awe felt during solar eclipses. Moments of wonder like these can transform how you feel and even how you think, making you more thoughtful and open-minded.

In my own work I’ve found these experiences can change how astronauts understand their own place in the universe.

One astronaut said she gained an awareness of the fragility of our planet that now shapes everything she does, while another described becoming more curious after returning to Earth. A third said the awe he experienced in lunar orbit changed his understanding of time and infinity.

Space travel creates many opportunities for awe, but a solar eclipse from behind the Moon, as Mission Commander Reid Wiseman put it, required “20 new superlatives.”

It’s an experience most of the earthbound eclipse-chasers heading to Greenland or Iceland or Spain this summer will only dream about. Whether eclipses happen in space or on Earth, though, close encounters with the grandeur of our universe can make you feel profoundly human.

Deana L. Weibel, Professor of Anthropology, Grand Valley State University

This article is republished from The Conversation under a Creative Commons license. Read the original article.

ooOOoo

In this difficult world at present, this is a perfect article. As was written, “… the awe he experienced in lunar orbit changed his understanding of time and infinity.”